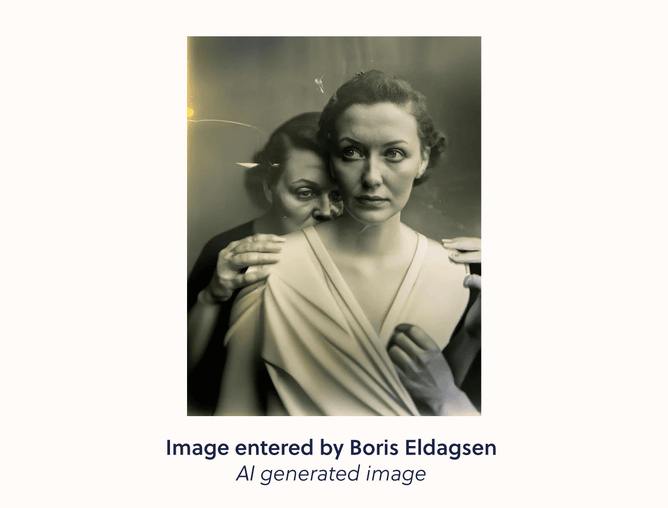

Chances are that you read about the controversial AI image that won this year's Sony World Photography Awards open category. German photographer Boris Eldagsen submitted the image under the guise of a regular photograph, as a test to see whether judges could distinguish AI from reality. The joke is that not only were they blind to the AI, they awarded it top prize. Eldagsen’s stunt raised some serious questions about the ethics of AI and image enhancement or manipulation, leaving many designers scratching their heads as to what the future will look like.

Designers innately know the power of great visuals. Film, photography and art prove just how influential the visual medium can be. This is called “visual persuasion”: imagery that makes us feel deeply, laugh, cry and think critically about a topic. During their careers, most designers will work for a business that wants to influence its customers through visual persuasion. Yet there is an elephant in the room when it comes to using images to influence people, and that is ethics, particularly around image retouching.

Image retouching goes hand in hand with visual persuasion, from photoshopped models to deceptively good-looking Big Macs. It is an ethical concern across the globe, because unrealistic imagery manipulates the people who see it. As a designer, you can expect a client to ask you to retouch images at some point, so it’s important to understand the ethics and history of the practice.

Retouching isn’t new. We’ve been doing it since the 1860s, when people manually touched up pictures with painting techniques. As technology evolved, so did the scale of what could be done. Eventually, the arrival of Photoshop changed everything, creating the culture of retouching we know today. Image retouching is often seen as negative, largely due to the unrealistic body standards impressed upon women. Cue the Photoshop Law and the Truth in Advertising Act, legislative efforts to make brands label retouched images in response to visual manipulation in the beauty industry.

Designers can learn from this push-back just how much consumers value transparency. Ultimately, people don’t like or trust brands that mislead them, and the business-savvy among us know that trust builds reputation and reputation builds success. You need look no further than the cult-like trust people have in Apple products to see reputation done well, or watch Succession to understand how much board members care about public perception.

The burgeoning field of AI retouching in particular highlights the need for transparency, and raises the question: when, as designers, are we going too far? There is a fine line between touching up images and deceiving audiences with unrealistic portrayals. Generative AI has come into its own in the past year, with the likes of ChatGPT and Midjourney rapidly transforming how we live and work. But as with any technology that develops faster than regulatory bodies can keep up with, there is always risk.

Adobe’s Vice President and Chief Trust Officer Dana Rao said “We’re at a tipping point where AI is going to break trust in what you see and hear — and democracies can’t survive when people don’t agree on facts. You have to have a baseline of understanding of facts.” The consensus is that businesses or organisations have an ethical responsibility to let people know when they are using AI to generate content.

We recommend that designers keep a critical hat on when retouching images, especially with the array of shiny new AI tools available to streamline our work. From Adobe’s new Sensei feature to Let’s Enhance and Lumen5, the tools that can enhance your offering as a designer are boundless and only improving. Our advice, however, is to always maintain a granularity or realness in the images you’re enhancing, and to disclose when your images have surpassed reality.

Power lies in narratives, and images help to create those. Yes, generative AI can be used to create emotionally evocative human rights images for a worthy cause, but it could also be used to influence people politically, or purely for financial benefit to others’ detriment.

The discussion around AI-generated images isn’t all about policing nefarious behaviour, however. Sure, this technology comes with some massive risks, but there is no denying how awesome its power really is.

If designers understand how misleading visual representations can damage consumer trust, they can use this technology to enhance their creative practice without harming consumers. It’s all about balance!